Meta is set to introduce its community notes feature, modeled after X's open-source system, which lets community contributors add fact-checking context to posts. The approach aims to mitigate bias by drawing on diverse opinions, but there is skepticism about how well it will curb misinformation, given that previous studies indicate high rejection rates for community notes on X. The moderation model also raises concerns that organized contributor groups could manipulate the visibility of certain posts. Although a sizeable contributor base has signed up, notes will have limited visibility in the initial phase, which will likely reduce the immediate impact on the overall post landscape. Because the system relies on user-generated contributions for content moderation, it could also increase the visibility of some misleading posts.

1. Introduction

2. What are Community Notes?

Notes are limited to 500 characters and must include a link to a reliable source that backs up the information provided. The requirement is meant to ensure that the added context rests on verifiable data.
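The two requirements above can be expressed as a simple check. The following is a minimal sketch, not Meta's implementation: the function name `validate_note` is hypothetical, and it assumes that length is counted in plain characters and that any `http` or `https` URL counts as a link. Whether the linked source is actually reliable is a human judgment that this check does not attempt.

```python
import re

MAX_NOTE_CHARS = 500  # limit stated in the text
# Assumption: any http(s) URL counts as "a link"; reliability is not checked.
URL_PATTERN = re.compile(r"https?://\S+")


def validate_note(text: str) -> list[str]:
    """Return a list of problems; an empty list means both checks pass."""
    problems = []
    if len(text) > MAX_NOTE_CHARS:
        problems.append(
            f"note is {len(text)} characters; the limit is {MAX_NOTE_CHARS}"
        )
    if not URL_PATTERN.search(text):
        problems.append("note does not include a link to a source")
    return problems
```

Returning a list of problems rather than a single boolean lets a contributor see every issue with a draft note at once.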

3. How Does it Work?

3.1 Becoming a Contributor

In the United States, Meta has already seen around 200,000 potential contributors sign up across Facebook, Instagram, and Threads. However, notes will not appear on content at the start of the rollout. Meta intends to test and refine the system before making the notes visible to all users.

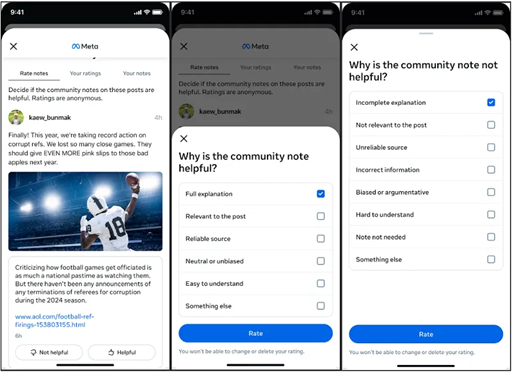

3.2 Adding and Evaluating Notes

Meta uses an algorithm that takes into account the ratings and feedback from contributors with diverse perspectives. The aim is to limit bias so that the notes that are displayed are objective and useful. For example, if a group of contributors with different political leanings all find a note helpful, it is more likely to be shown to the general public.
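The idea of requiring agreement across perspectives can be illustrated with a deliberately simplified sketch. It is not Meta's or X's actual algorithm: it assumes each rating arrives with an explicit perspective label, whereas a real system would have to infer perspectives in some way. The function name `is_broadly_helpful` and the threshold values are hypothetical.

```python
from collections import defaultdict


def is_broadly_helpful(ratings, min_groups=2, min_per_group=2, threshold=0.6):
    """Decide whether a note has support across perspective groups.

    ratings: iterable of (perspective_label, found_helpful) pairs.
    A note passes only if at least `min_groups` groups have at least
    `min_per_group` ratings each, and in every such group the share of
    "helpful" ratings is at least `threshold`.
    """
    counts = defaultdict(lambda: [0, 0])  # label -> [helpful, total]
    for label, helpful in ratings:
        counts[label][0] += int(helpful)
        counts[label][1] += 1

    # Ignore groups with too few ratings to say anything about them.
    qualified = [(h, n) for h, n in counts.values() if n >= min_per_group]
    if len(qualified) < min_groups:
        return False
    return all(h / n >= threshold for h, n in qualified)
```

Under this rule, a note rated helpful by many contributors from a single group still fails, which is the property the paragraph above describes: volume from one side is not enough.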

3.3 Displaying the Notes

The way the notes are presented is designed to be non-intrusive. They are not meant to overshadow the original post but rather to offer users the option to access more information if they so choose.

4. Meta's Rationale for Introducing Community Notes

4.1 Addressing the Shortcomings of Third-Party Fact-Checking

For example, different fact-checking organizations might have their own editorial biases, which could lead to inconsistent fact-checking. Also, with the vast amount of content posted on Meta's platforms every day, the third-party systems struggled to keep up, leaving much misinformation unaddressed.

4.2 Promoting User Engagement and Responsibility

This also gives a sense of ownership to the users, as they can directly contribute to making the platform a more reliable source of information. For instance, a user who is passionate about a particular topic can use their knowledge to add accurate and useful notes to relevant posts, thereby helping others in the community.

4.3 Meeting the Challenge of Misinformation

Research has shown that misinformation can have a significant impact on public opinion and decision-making. By enabling the community to fact-check and add context, Meta hopes to counter this trend and create a more informed user base.

5. Similarities and Differences with X's System

5.1 Similarities

Also, both systems require a consensus of sorts. On X, and now on Meta's platforms, notes need to be approved by a diverse range of users to be displayed. This helps in ensuring that the notes are objective and not influenced by a single group's biases.

5.2 Differences

Another difference might lie in the user base and the type of content that is typically shared on each platform. Facebook and Instagram have a more diverse user base in terms of age, location, and interests compared to X. This could potentially lead to different types of notes being added and different challenges in terms of ensuring the accuracy and relevance of the notes.

6. Challenges and Concerns

6.1 Risk of Manipulation

Meta will need to implement strict anti-manipulation measures. This could include using advanced algorithms to detect coordinated voting patterns and having a team to review flagged notes for signs of manipulation.
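One simple way to look for coordinated voting patterns is to compare how pairs of raters voted on the notes they both rated. The sketch below is an illustration under stated assumptions, not a description of any measure Meta has announced: the function name `flag_coordinated_pairs`, the input layout, and both thresholds are hypothetical.

```python
from itertools import combinations


def flag_coordinated_pairs(votes, min_shared=5, min_agreement=0.95):
    """Flag rater pairs whose votes match almost everywhere.

    votes: dict mapping rater id -> dict mapping note id -> bool
           (True if the rater marked the note helpful).
    Returns a list of (rater_a, rater_b) pairs that rated at least
    `min_shared` of the same notes and agreed on at least
    `min_agreement` of them.
    """
    flagged = []
    for a, b in combinations(sorted(votes), 2):
        shared = votes[a].keys() & votes[b].keys()
        if len(shared) < min_shared:
            continue  # too little overlap to compare
        agree = sum(votes[a][n] == votes[b][n] for n in shared)
        if agree / len(shared) >= min_agreement:
            flagged.append((a, b))
    return flagged
```

High agreement alone does not prove manipulation, since honest raters often agree. In this sketch the output is only a shortlist for the kind of human review the paragraph above mentions.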

6.2 Polarization and Bias

For example, on a controversial political topic, contributors from different political ideologies might have such strong opinions that they are unable to reach a consensus. Meta will need to find ways to encourage more open-mindedness and civil discourse among contributors.

6.3 Effectiveness Against AI-Generated Misinformation

Meta will need to invest in research and development to find ways to train contributors to identify and fact-check AI-generated misinformation. Additionally, the company might need to develop new technological tools to assist in the detection and debunking of such content.

6.4 Adoption and User Trust

Meta will need to actively promote the feature and educate users about how it works. Demonstrating the accuracy and usefulness of the notes through case studies and user testimonials could help in building trust among the user base.

7. The Future of Community Notes

7.1 Expansion Plans

This expansion will require careful consideration of cultural, linguistic, and regulatory differences in different regions. Meta will need to ensure that the system is adapted to the specific needs and characteristics of each market.

7.2 Integration with Other Features

Integration with advertising could also be explored, although contributors are currently not allowed to submit notes on advertisements. In the future, this might change, allowing users to fact-check and add context to potentially misleading ads.

7.3 Impact on Content Moderation

This could reduce the burden on Meta's internal moderation teams and shift more of the responsibility to the users. However, it also means that Meta will need to continuously monitor and support the system to ensure its integrity.

If successful, Community Notes could not only improve the quality of information on Meta's platforms but also set a new standard for content moderation in the social media industry. As the system evolves and expands, it will be interesting to see how it impacts user behavior, the spread of misinformation, and the overall user experience on Facebook and Instagram.